Market momentum

Chief rival Google is also using emerging AI techniques to improve language-translation quality across its service.

In August, Microsoft said that a Z-code model with 10 billion parameters could achieve state-of-the-art results on machine translation and cross-lingual summarization tasks. Microsoft is also working to train a 200-billion-parameter version of that benchmark-beating model. For reference, OpenAI’s GPT-3, one of the world’s largest language models, has 175 billion parameters. In machine learning, parameters are internal configuration variables that a model uses when making predictions, and their values essentially, but not always, define the model’s skill on a problem.
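To make the notion of a parameter count concrete, here is a minimal sketch that tallies the weights and biases of a small fully connected network. The layer widths and the function name `count_dense_parameters` are hypothetical, chosen only for illustration; this is not how Z-code or GPT-3 is structured, just the arithmetic behind figures like "10 billion parameters."

```python
def count_dense_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the width of each layer, input layer first.
    """
    total = 0
    for fan_in, fan_out in zip(layer_sizes, layer_sizes[1:]):
        total += fan_in * fan_out  # one weight per input-output connection
        total += fan_out           # one bias per output unit
    return total


# Hypothetical toy network: 512 inputs, one hidden layer of 2048, 512 outputs.
toy_layers = [512, 2048, 512]
print(count_dense_parameters(toy_layers))
```

Each of these values is adjusted during training, which is why the count is often used as a rough proxy for a model's capacity.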

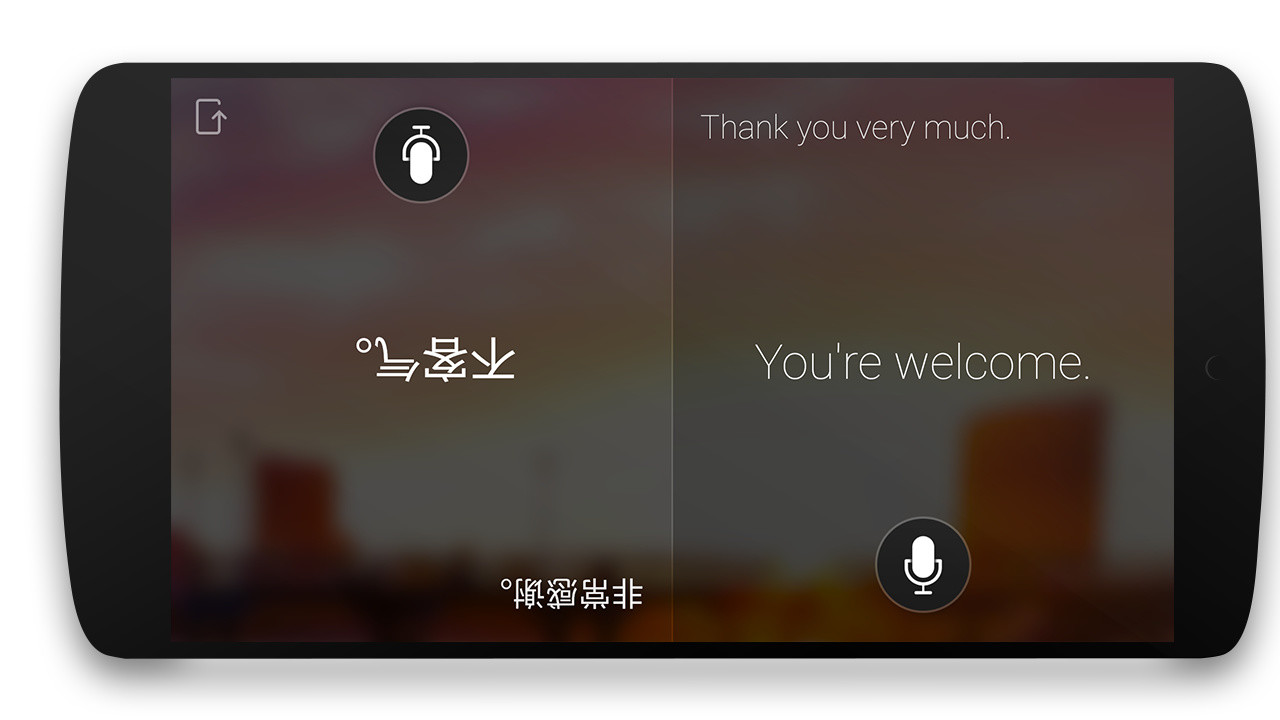

Techniques like neural machine translation, rewriting-based paradigms, and on-device processing have led to quantifiable leaps in machine translation accuracy. But until recently, even the state-of-the-art algorithms lagged behind human performance.

Powering Translator’s upgrades is Z-code, a part of Microsoft’s larger XYZ-code initiative to combine AI models for text, vision, audio, and language in order to create AI systems that can speak, see, hear, and understand. Z-code provides the framework, architecture, and models for text-based, multilingual AI language translation for whole families of languages. The team behind it comprises a group of scientists and engineers who are part of Azure AI and the Project Turing research group, focusing on building multilingual, large-scale language models that support various production teams.

With Z-code, Microsoft is using transfer learning to move beyond the most common languages and improve translation accuracy for “low-resource” languages, meaning languages with under 1 million sentences of training data. (Like all models, Microsoft’s learn from examples in large datasets sourced from a mixture of public and private archives.) Approximately 1,500 known languages fit this criterion, which is why Microsoft developed a multilingual translation training process that marries language families and language models.

Z-code language models are trained multilingually across many languages, and that knowledge is transferred between languages. Another round of training transfers knowledge between translation tasks. For example, the models’ translation skills (“machine translation”) are used to help improve their ability to understand natural language (“natural language understanding”). Because of the sharing of linguistic elements across similar languages and transfer learning, which applies knowledge from one task to another related task, Microsoft claims it managed to dramatically improve the quality and reduce costs for its machine translation capabilities.

Efforts beyond Microsoft illustrate the magnitude of the problem: the Masakhane project, which aims to render thousands of languages on the African continent automatically translatable, has yet to move beyond the data-gathering and transcription phase. Additionally, Common Voice, Mozilla’s effort to build an open source collection of transcribed speech data, has vetted only dozens of languages since its 2017 launch.
